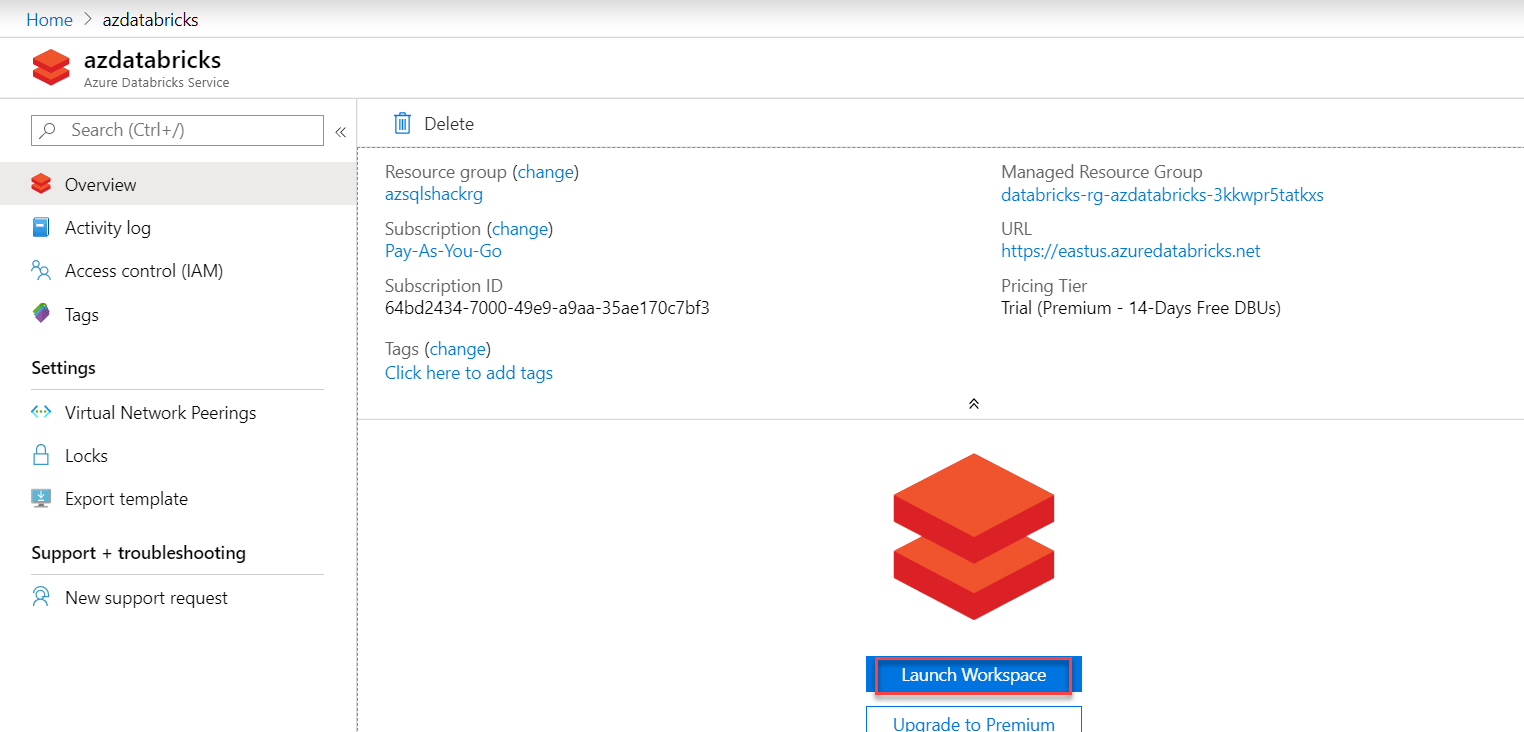

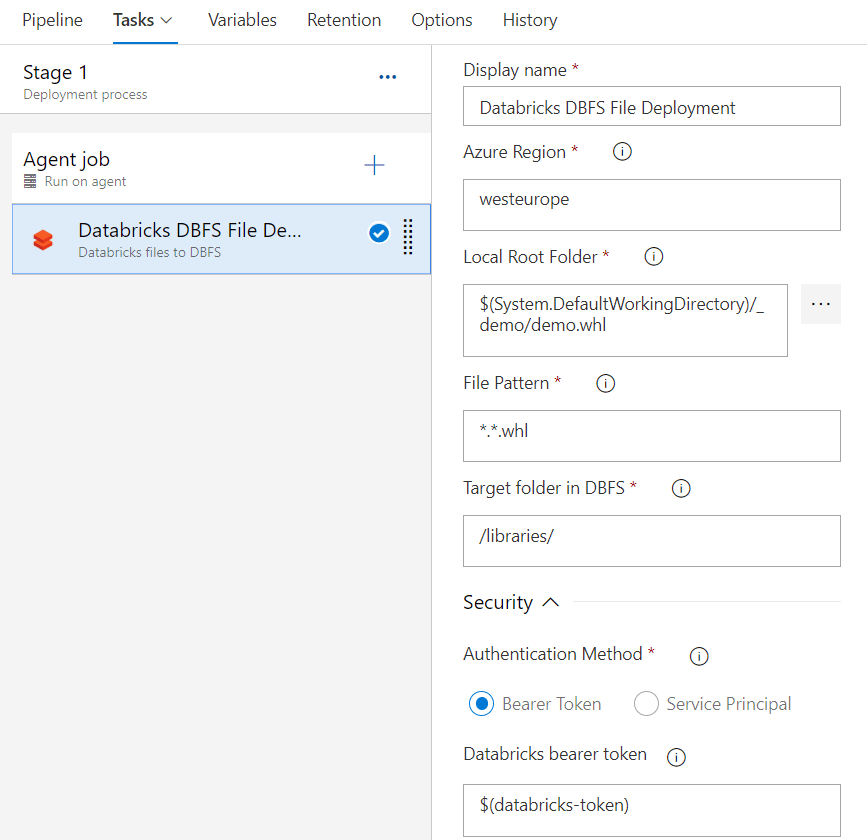

To create the secret, first run `databricks configure --token` and enter your personal access token when prompted. Then create a scope for that secret, passing the scope name to `--scope`:

`databricks secrets create-scope --scope --initial-manage-principal users`

`--initial-manage-principal` must be set to "users" when using a non-premium-tier account, as this is the only allowed scope for secrets. The secret itself is then stored with:

`databricks secrets put --scope --key DataLakeStore`

In the notebook, the secret can then be used to connect to ADLS using the following configuration:

- `("fs.", (scope = "", key = "DataLakeStore"))`
- `("fs.azure.createRemoteFileSystemDuringInitialization", "true")`
- `dbutils.fs.ls("abfss://.net/")`
- `("fs.azure.createRemoteFileSystemDuringInitialization", "false")`

The file could then be loaded into a Spark dataframe through `sqlContext`, with the options `header='true'` and `inferSchema='true'`. Although the pandas read function does work in Databricks, we found that it does not work correctly with external storage.

To get a pandas dataframe you can use `pandas_df = df.toPandas()` alongside the setting `(".enabled", "true")`. This setting enables the use of the Apache Arrow data format: the data is converted into Arrow format, which pyarrow can then turn into a Pandas dataframe. Without this setting, each row must be individually pickled (serialized) and converted to Pandas, which is extremely inefficient.

However, we still ran into problems with the types of the various columns when trying to convert back into a Spark dataframe to write the data out to the file system. At this point, I was beginning to suspect that it was going to make sense to use PySpark instead of Pandas for the data processing.
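The notebook steps described above (reading the secret, pointing Spark at ADLS, loading the CSV, converting to pandas with Arrow) can be sketched roughly as follows. This is a minimal sketch, not the exact code from the original notebook. The helper names, the `storage_account` and `file_system` parameters, the full `fs.azure.account.key...` property name, and the `spark.sql.execution.arrow.enabled` property name are all assumptions filled in from the standard ABFS and Spark conventions, since the identifiers in the text are truncated. `spark` and `dbutils` are the objects a Databricks notebook provides, and `spark.read` is used where the text uses `sqlContext`.

```python
# Sketch only: `spark` and `dbutils` are passed in explicitly so that nothing
# here depends on notebook globals. All helper names are hypothetical.


def account_key_property(storage_account):
    """Spark property holding the ADLS account key (assumed ABFS naming)."""
    return f"fs.azure.account.key.{storage_account}.dfs.core.windows.net"


def abfss_uri(file_system, storage_account, path=""):
    """Build an abfss:// URI; file_system and storage_account are placeholders."""
    host = f"{storage_account}.dfs.core.windows.net"
    return f"abfss://{file_system}@{host}/{path.lstrip('/')}"


def configure_adls(spark, dbutils, storage_account, scope, key="DataLakeStore"):
    """Read the account key from a secret scope and register it with Spark."""
    secret = dbutils.secrets.get(scope=scope, key=key)
    spark.conf.set(account_key_property(storage_account), secret)


def load_csv(spark, uri):
    """Load a CSV with a header row, letting Spark infer the column types."""
    reader = spark.read.format("csv")
    return reader.options(header="true", inferSchema="true").load(uri)


def to_pandas_with_arrow(spark, df):
    """Enable Arrow-based conversion, then collect the dataframe to pandas."""
    # Assumed property name; Spark 3.x prefers
    # "spark.sql.execution.arrow.pyspark.enabled".
    spark.conf.set("spark.sql.execution.arrow.enabled", "true")
    return df.toPandas()
```

In a notebook this would be called along the lines of `configure_adls(spark, dbutils, "<storage-account>", "<scope>")` followed by `load_csv(spark, abfss_uri("<file-system>", "<storage-account>", "<path>"))`, with the placeholders replaced by your own values. Passing `spark` and `dbutils` as arguments keeps the helpers easy to exercise outside Databricks with stand-in objects.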
